It would have been so awesome to rip out all our switching infrastructure and put in hardware that supports Multigigabit and UPOE.

Sadly, management didn’t want the network infrastructure projects to wipe out the entire IT budget. The good news is that there is a simple way to take advantage of “more than gigabit” speeds with Meraki MR series access points and existing Cisco Catalyst switches. While installing about 100 APs, I encountered a quirk with Meraki MR52 (and MR53E) units “turning on” their LAG (Link Aggregation Group) functionality.

Link Aggregation

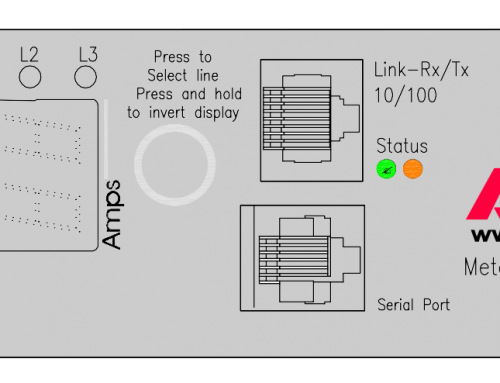

The two Ethernet uplinks on the MR52 can be configured for link aggregation, which relieve any existing uplink bottlenecks created by 802.11ac Wave 2.

Source: Meraki Datasheet – MR52

This article focuses simply on how to get this working, since at the time of publishing there isn’t much documentation from Meraki on the best way to do it. We’re going to assume you already know what LAGs / EtherChannels are and what they are used for. Keep in mind that this approach requires two Ethernet runs to every access point, which might not be feasible due to cabling limitations and switch port density restrictions.

Here’s the main takeaway.

Before you attempt to set up the switch EtherChannel side of things, fire up the Meraki AP on an IP / VLAN that has Internet access and make sure that it connects to the cloud FIRST, updates itself, and completes a basic configuration. I noticed that if I did not let the AP connect to the cloud “controller” first to sync its config, it would not build a LAG and bring up both interfaces in the EtherChannel (when checking status on the switch side).

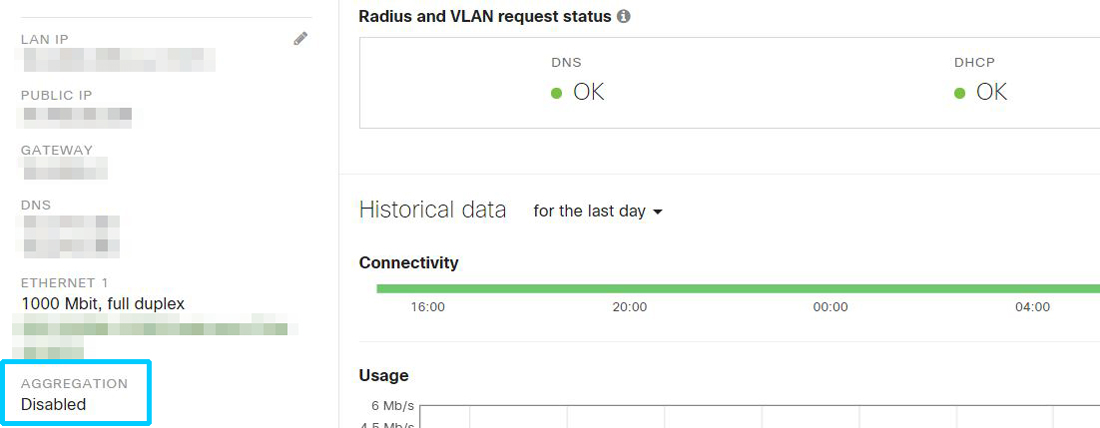

Here’s what your Meraki Dashboard may display with a single uplink for network access. Pay particular attention to the “Aggregation” section.

Cisco Catalyst configuration to get things going.

Let’s take a look at the Catalyst 3850 switch side. Here’s the configuration and status when the AP is unwilling to set up a LAG connection. This particular switch (WS-C3850-48P) is running IOS-XE 03.06.05E. We’re using PortChannel interface 100, which bundles two physical gigabit copper ports, G2/0/29 and G2/0/40. Your native VLAN will probably be different; use whatever is appropriate in your environment (assuming that you need trunking for multiple VLANs).

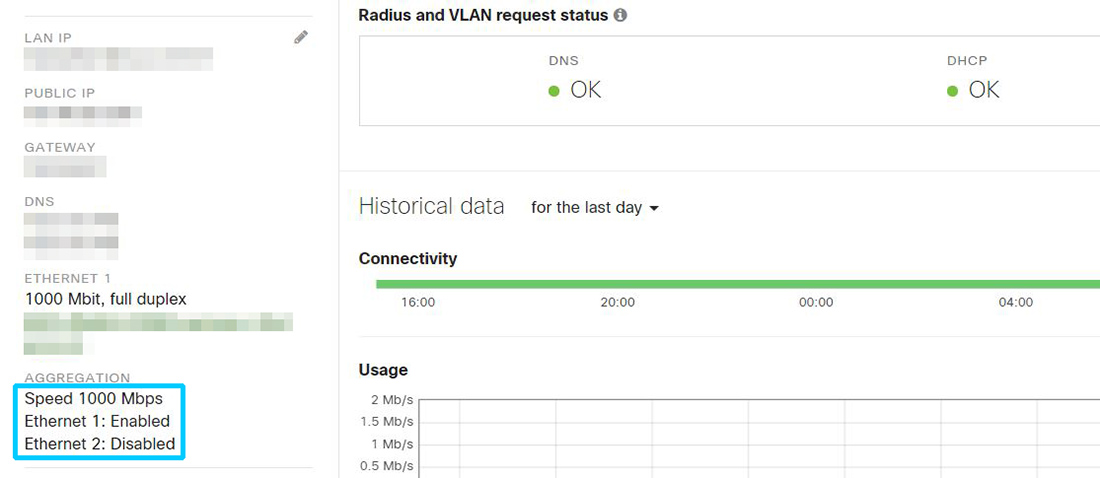

This is what the status of your EtherChannel will be if you pull a Meraki MR out of the box, hook it up to two interfaces bundled in an EtherChannel, and expect it to set up Aggregation on its side. Notice the two physical interfaces in the “s” (suspended) state. I also tried changing the port type to access ports in a regular data VLAN with Internet access, but it did not make a difference.

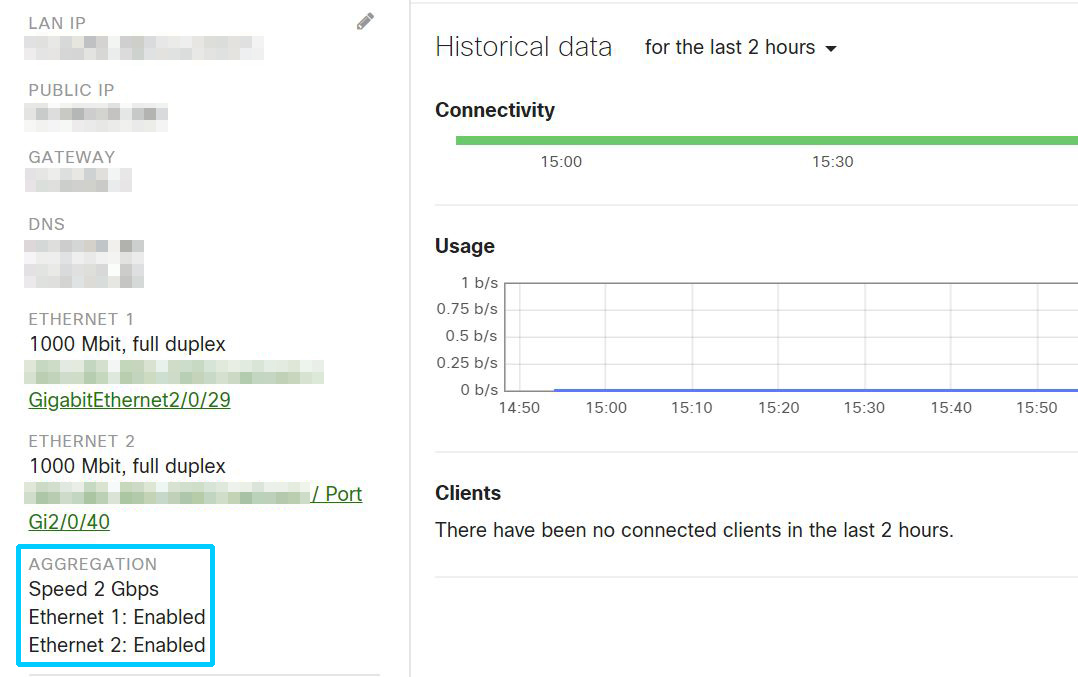
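If you are rolling out many APs, checking each EtherChannel by eye gets tedious. Below is a minimal Python sketch that scans the text of `show etherchannel summary` and lists the member ports flagged “s”. The function names and the `SAMPLE` text are hypothetical: the sample is an illustrative approximation of the summary table (including an assumed LACP protocol column), not output captured from my switch, and the parser assumes all member ports of a group sit on a single line.

```python
import re

# Matches a member-port token such as "Gi2/0/29(s)" or "Te1/1/1(P)".
MEMBER_RE = re.compile(r"^([A-Za-z]+[\d/]+)\(([A-Za-z])\)$")


def member_flags(summary_text, group):
    """Return {port: flag} for one channel group in the summary table."""
    flags = {}
    for line in summary_text.splitlines():
        fields = line.split()
        # Data rows start with the group number; skip headers and rulers.
        if len(fields) < 3 or fields[0] != str(group):
            continue
        # fields[1] is the port-channel itself (e.g. "Po100(SD)"), so the
        # member ports can only appear from the third field onward.
        for token in fields[2:]:
            match = MEMBER_RE.match(token)
            if match:
                flags[match.group(1)] = match.group(2)
    return flags


def suspended_ports(summary_text, group):
    """List member ports whose flag is 's' (suspended)."""
    flags = member_flags(summary_text, group)
    return sorted(port for port, flag in flags.items() if flag == "s")


# Hypothetical sample text, for illustration only.
SAMPLE = """\
Group  Port-channel  Protocol    Ports
------+-------------+-----------+-----------------------
100    Po100(SD)       LACP      Gi2/0/29(s) Gi2/0/40(s)
"""

if __name__ == "__main__":
    print(suspended_ports(SAMPLE, 100))
```

An empty list for a group means none of its members are suspended; it does not by itself confirm that the ports are bundled, so check for the “P” flag in `member_flags` as well.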

What does this look like when it’s actually working?

Temporarily connect the Meraki MR to a regular access switchport somewhere and let it get online and synchronize with Meraki Dashboard. After a few minutes, physically connect it back to the EtherChannel interfaces. The status on the switch side should look a bit more normal, and you can verify Aggregation is working on the Meraki AP status page.

I don’t think this is specific to just 3850s either; I noticed similar behavior on Catalyst 9300s as well. Perhaps this is more plug and play with Meraki MS switches, but I didn’t have any available to lab this setup with. Hope this helps some people out there looking to deploy Meraki APs with Cisco Catalyst switches!
